Birmingham City University – Vehicle crash test analysis

How long does it take to analyse a crash that only lasts a quarter of a second?

Thanks to technology developed by Birmingham City University, it’s a process that’s much shorter than it used to be.

Back in the mid-nineties, when it was known as the University of Central England, the university’s Digital Media Technology research group started working on a new system for reviewing multimedia footage of crashes. It quickly revolutionised the way vehicle companies analysed crash data, and even prompted a new international standard.

The research has assisted manufacturers in creating safer vehicles, and enabled faster, more intuitive and more insightful analysis of crash test data.

“It proved very popular with the major manufacturers”, said group leader Professor Cham Athwal. “And within a few years, everyone was bringing out copycat systems.”

So why did it become so popular? To understand that, consider how much equipment the average crash test experiment requires.

Car companies know that thorough research into crash safety saves lives, so they are keen to get as much information from their experiments as possible. As the test vehicle charges toward its impact, it is typically recorded by around 12 high-speed cameras, 40 vehicle transducers, and 60 transducers attached to the dummy to register injury. The crash is also captured in a number of still photographs, while measurements of the vehicle are taken before and after the crash. The investigation sparks a whole raft of paperwork, from explanations to graphs, tables, analysis and conclusions.

Now imagine that all of this footage is shot on 16mm celluloid film, at 1,000 frames a second, and has to be reviewed meticulously on a special projector, frame-by-frame, from different angles.

Exhausted yet?

“Before our system, people would have to spend days looking through rolls of film, picking through data and drawing up graphs”, said Professor Athwal. “Now they can do the analysis in a day or half a day, and have the time to make better design modifications.”

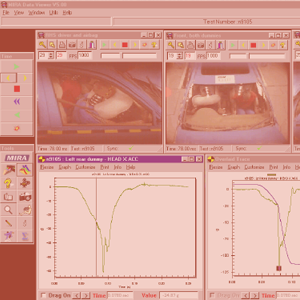

Working with the Motor Industry Research Association (MIRA), Professor Athwal’s team researched and developed a system that could blend multimedia such as video footage, stills and sensor data from multiple sources, and mesh it into a single chronological record of the crash.

Investigators could pinpoint an instant in a crash, and see information from different angles and sources, synced to that exact split-second.

“In the past, people would have lots of computer files to check, with data coming from all the sensors, as well as lots of paper and rolls of film”, said Professor Athwal. “We built a front-end system that allowed investigators to see all of the angles based on the specific time. You could then step through each of the videos and datasets and see what was happening at a certain point.”
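The idea of looking at every source at one shared instant can be sketched in a few lines of Python. This is only an illustration, not the team’s implementation: the class names `VideoSource` and `SensorChannel`, the `snapshot` function, and the sample camera and sensor values are all assumptions made for the example. It also assumes that every source is timestamped in seconds relative to the moment of impact.

```python
from bisect import bisect_left
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class VideoSource:
    """A hypothetical camera recording with a fixed frame rate."""
    name: str
    fps: float        # frames per second, e.g. 1000 for high-speed footage
    start_s: float    # time of frame 0 relative to impact, in seconds
    n_frames: int

    def frame_at(self, t: float) -> int:
        """Index of the frame nearest to time t, clamped to the recording."""
        idx = round((t - self.start_s) * self.fps)
        return max(0, min(self.n_frames - 1, idx))


@dataclass
class SensorChannel:
    """A hypothetical transducer trace with sorted sample times."""
    name: str
    times_s: List[float]
    values: List[float]

    def value_at(self, t: float) -> float:
        """Value of the sample whose timestamp is nearest to t."""
        i = bisect_left(self.times_s, t)
        if i == 0:
            return self.values[0]
        if i == len(self.times_s):
            return self.values[-1]
        before, after = self.times_s[i - 1], self.times_s[i]
        return self.values[i] if after - t < t - before else self.values[i - 1]


def snapshot(t: float, videos: List[VideoSource],
             sensors: List[SensorChannel]) -> Dict[str, dict]:
    """Collect what every source shows at the same instant t."""
    return {
        "frames": {v.name: v.frame_at(t) for v in videos},
        "readings": {s.name: s.value_at(t) for s in sensors},
    }


# Made-up example data: two cameras at different frame rates, one sensor.
cameras = [
    VideoSource("side", fps=1000, start_s=-0.05, n_frames=300),
    VideoSource("overhead", fps=500, start_s=-0.05, n_frames=150),
]
sensors = [
    SensorChannel("head_accel_g", [0.0, 0.01, 0.02, 0.03], [0.0, 12.0, 45.0, 30.0]),
]
view = snapshot(0.02, cameras, sensors)
print(view)
```

Because each source converts the shared time into its own frame or sample index, cameras running at different frame rates and sensors sampled at different intervals can all be stepped through together.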

The system – which was made possible through funding from the Technology Strategy Board and EPSRC – reduced analysis time from several weeks to a matter of hours. It also allowed investigators to compare the test frame-by-frame against a previously completed simulation, and assess whether the simulation had been calibrated correctly.

“It allowed companies to make better design decisions more quickly, and dramatically shorten their design cycles”, said Professor Athwal. “Plus, it helped to reduce injuries and prevent fatalities.”

A pair of products were launched: DataBuilder structured and stored crash data, while DataViewer allowed companies to analyse it using multimedia. MIRA began using the technology in-house, and noticed significant improvements in efficiency and accuracy.

People sat up and paid attention. The prototype project won the Teaching Company Scheme’s Best Technology Transfer Programme award in 1999. The system reached the market in 2003, and before long major crash test facilities were using it, from European standard bearers such as Millbrook Proving Ground in Bedford, to international car giants such as Ford and General Motors.

It also had a lasting effect on how the industry worked. The team’s research was incorporated into the international standard for crash data, and remains there to this day.

Considering the level of industry interest, a spin-out startup seemed inevitable. In the mid-2000s, research associate Jimmy Robinson set up Pixoft to work with companies and refine the research further. This fine-tuning resulted in the 2006 release of Vicasso, a market-leading product that is now used by prominent names such as Jaguar Land Rover and General Motors. It has also found markets outside the car industry, with clients including Duracell, Unilever and the US Army.

MIRA was also finding other uses for the system. It has been used for rail and plane safety, down to studying how seats react to a collision. It has even been adapted to gauge how buildings and structures might respond to impact, from Westminster to Heathrow Terminal 5.

“The system itself is very flexible”, said Professor Athwal. “It was originally built with car crash testing in mind, but it has been useful for all sorts of engineering and scientific investigations where data and video are recorded together.

“We have had people use it to measure how bubbles form in biological systems, and to conduct spray analysis. One example we gave in our paper was analysing astronomical data from solar flares, which involves looking at videos of solar flares and synchronising readings of radiation on the Earth’s surface.”

Meanwhile, the team’s work on digital media systems continues, including some projects that wouldn’t look out of place in a sci-fi blockbuster.

“At the moment, we’re looking at virtual TV systems that are interactive. We are researching the creation of scenes that show virtual objects that a person can grab and move around in real-time, like Iron Man in the movies.

“Imagine using something like this for consultations. A medical professional could show you a 3D projection of your knee, created from your latest CT scans. They could show you how it fits together and point out exactly what’s wrong with your knee by grabbing the model and manipulating it in their hands. The doctor would see it on the screen in front of him, while the patient at the other end of the Skype call sees it as if it was an actual object that was being held and moved about.

“That would certainly be a more intuitive solution.”

Links to Additional Information

Digital Image and Video Processing Group BCU

http://cs-academic-impact.uk/crash-testing-vehicles/