University of Brighton – Dictionary Production

Language changes all the time, which makes the job of a dictionary particularly tricky.

Keeping track of new meanings and new words is a huge task for a human, which is why many major dictionary makers have switched to a computer system built on research undertaken at the University of Brighton.

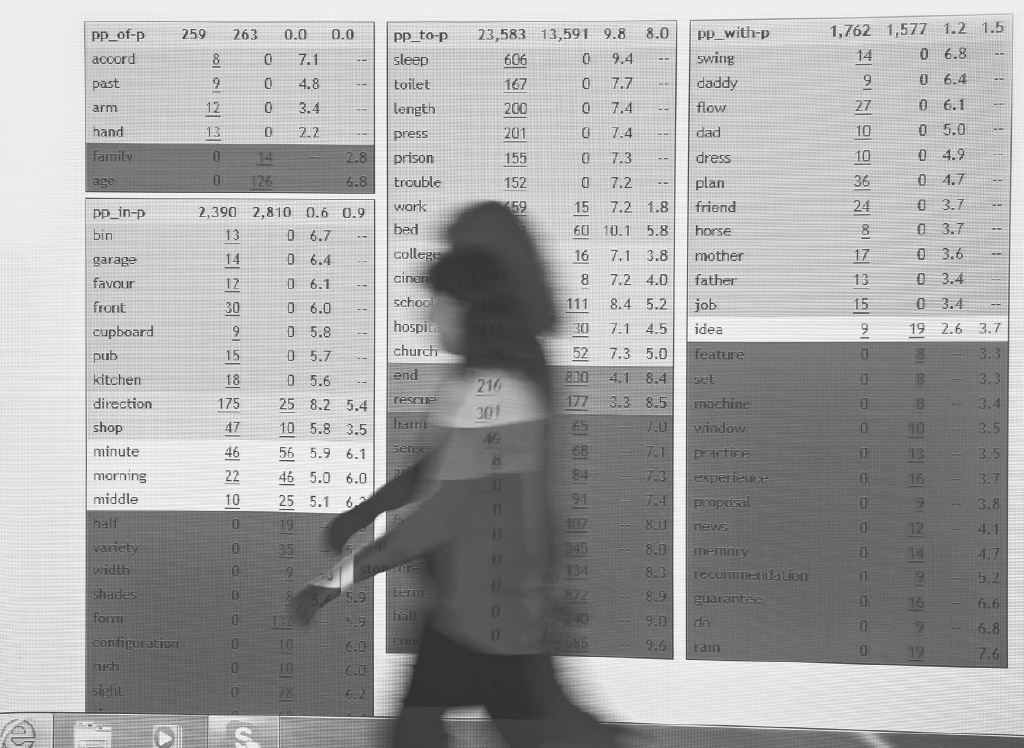

The Sketch Engine is a system for people who want to get a data-driven idea of how words behave. It emerged from Brighton’s research into computational lexicography, which has been a focus for the university since Roger Evans arrived in 1993. With EPSRC research funding, Evans brought in a research fellow called Adam Kilgarriff, who was the main instigator in creating a tool that has changed the way that dictionaries are compiled around the world.

“We started off thinking about how you build resources that you can use for computerised language processing systems”, says Evans. “To do that, you need a lot of information about how words behave, so we started looking at dictionaries.

“What was going on there was more interesting and challenging than we expected. We ended up supporting the dictionary-making process rather than drawing from existing dictionaries. There was a definite progression from building computer systems to creating tools for production.

“We developed a tool that collects a lot of examples of language use and uses them to produce something that is effectively a draft dictionary of words and meanings that a lexicographer can work with.”

To produce a dictionary, you need a large collection of language, which will tell you how a word is used, how often it appears and where. Dictionary-makers take advantage of a large repository of sentences and literature known as a Corpus, which contains millions of words. For example, the British National Corpus offers 100 million words drawn from literature and spoken conversation, providing a valuable idea of how contemporary language is used.

Previously, words would be picked out by poring through these corpora (the plural of ‘corpus’) and jotting them down on cards as they appear, then reviewing the context in which they appear. Definitions often would be based on these manually-collected examples, combined with the experience of the compiler.

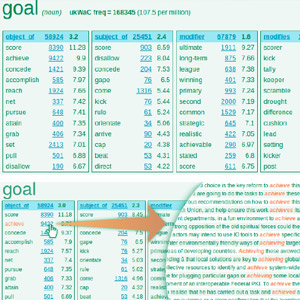

Kilgarriff argued that the sense of a word needed to be based on its usage, and that the best way to approach the problem was to trawl through the entirety of the evidence and group word occurrences with similar meanings.

“The thing I did that no one had done before, was to base the analysis of corpus data on grammar rather than just word-spotting”, said Kilgarriff. “This took it into the realm of computational linguistics.”

Working alongside research fellow David Tugwell with funding from EPSRC, Kilgarriff developed the idea of “word sketches”, which present a word in the context of how it behaves grammatically and in conversation. This has changed the way in which lexicographers explore and analyse word usage.

Kilgarriff says: “The first version of word sketching started as a small research project for Macmillan, who had been in discussions on how to improve their corpus use. It got very good reviews as it sped up their processing, made it more objective and helped them to include things that other dictionaries might have missed.”

This research was at the heart of spin-out company Lexical Computing, which Kilgarriff formed in 2003. Its flagship technology – The Sketch Engine – enabled commercial lexicographers and dictionary makers to quickly analyse large amounts of evolving examples of language. It was a breakthrough that was particularly interesting to dictionary makers who were just getting to grips with digital publishing.

Macmillan had used the pre-Sketch-Engine word sketches, but Oxford University Press was the first publisher to adopt the Sketch Engine in its entirety in 2004. It has been using it ever since.

Oxford University Press say it has helped them to create a detailed profile of a word within seconds, hugely bolstering their in-house research. It has also been adopted by Collins and Cambridge University Press in the UK, as well as commercial publishers worldwide, such as Shogakukan in Japan, Le Robert in France, and National Language Institutes in Bulgaria, the Czech Republic, Estonia, Ireland, the Netherlands, Slovakia and Slovenia.

Lexical Computing’s tool allows users to get information on between 30 million and 15 billion words in a variety of languages. That has potential for other organisations as well.

Lexical Computing’s tool allows users to get information on between 30 million and 15 billion words in a variety of languages. That has potential for other organisations as well.

For example, the company sees huge potential in using the approach to help people get to grips with collocations in the English language.

“If your native language is not English, how would you know that we make mistakes but take care, have sex but make love?” says Kilgarriff.

Every year, Kilgarriff and his colleagues deliver an intensive, week-long training course called Lexicom, which attracts publishers, universities and government agencies. Organisations across the world have noted its usefulness for professional training and language teaching.

Artist David Levine and PhD student Alix Rule used the Sketch Engine to pick through thousands of emails from art-world email service e-flux, identifying which words and phrases came up more in “International Art English” than in everyday spoken English. They noticed a tendency towards longer sentences, and a significant upswing in uses of words and phrases such as “multitude”, “the real world” and “the void”.

It is also being used to analyse the language that children use, in collaboration with Oxford University Press and the BBC.

“There are lots of different companies using it in slightly different ways”, says Kilgarriff. “Some hi-tech companies see it as an effective tool to help them find a good name for their business. Others want it for translation, language teaching or linguistics research.

“Dictionary companies are our biggest customers, but they represent less than half of company income.”

The techniques and technology explored at Brighton have already changed the nature of lexicographical study and resulted in a successful company. So what’s next?

“We’ve talked about computers that are capable of advanced language processing, but the way that we use language is hugely adaptive and context dependent”, says Evans. “We can get computers to deal with one style and genre but that’s not the way that people talk. We want them to be able to switch between styles in the way that people do, to answer one question in a technical way and then to talk about the weather or the football in the same conversation.

“If we can crack the single-style problem, which hasn’t been done yet, we can create systems with the adaptability and context sensitivity to unlock a whole range of more natural language applications.”

Links to Additional Information

Word Sketches at the University of Brighton

http://cs-academic-impact.uk/dictionary-publishers/Case Study